How Cross-Checks Work

A cross-check is the core operation of Shield. It automatically queries an AI engine about your organization, extracts information from the response, and compares it to your Truth Nuggets to score accuracy.

The Process

Shield runs cross-checks in six steps:

Step 1: Generate Query

Shield automatically creates a natural-language question based on a Truth Nugget.

Example Truth Nugget:

- Fact: “TruthVouch Shield monitors 7 AI engines”

- Category: Products

Auto-generated queries (vary each time):

- “What is TruthVouch Shield and which AI engines does it monitor?”

- “Tell me about TruthVouch’s hallucination detection capabilities”

- “What AI engines does TruthVouch integrate with?”

Shield builds natural-sounding questions from templates with randomization. Varying the wording helps keep AI engines from returning memorized responses and keeps results from depending on a single phrasing.
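Here is a minimal sketch of template-based query generation, assuming each Truth Nugget carries a few slot values that can fill a template. The template strings, the slot names (`product`, `org`, `topic`), and `generate_query` are hypothetical. Shield's actual templates and randomization are not documented here. Each call picks a template at random and fills it in.

```python
import random

# Hypothetical templates modeled on the example queries above.
TEMPLATES = [
    "What is {product} and which AI engines does it monitor?",
    "Tell me about {org}'s {topic} capabilities",
    "What AI engines does {org} integrate with?",
]


def generate_query(slots: dict[str, str], rng: random.Random) -> str:
    """Pick one template at random and fill its placeholders from `slots`."""
    template = rng.choice(TEMPLATES)
    # str.format ignores keys a template does not use, so one slot dict serves all templates.
    return template.format(**slots)


if __name__ == "__main__":
    slots = {
        "product": "TruthVouch Shield",
        "org": "TruthVouch",
        "topic": "hallucination detection",
    }
    rng = random.Random()
    for _ in range(3):
        print(generate_query(slots, rng))
```

The function takes an explicit `random.Random` so the choice can be seeded and reproduced in tests while still varying in normal use.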

Step 2: Query AI Engine

Shield automatically sends the query to the AI engine and collects the response.

Example: sending the query to ChatGPT:

User: "What is TruthVouch Shield and which AI engines does it monitor?"

ChatGPT: "TruthVouch Shield is a hallucination detection platform that monitors AI systems for inaccuracies. It integrates with major LLM providers like OpenAI, Anthropic, Google, and others."

The response is logged with:

- Full text

- Engine and model

- Timestamp

- Latency

- Any errors or API issues
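Below is a sketch of the logging step, assuming the engine call is wrapped in a plain function. `ResponseLog` and `query_engine` are hypothetical names. `send` stands in for whichever API client is used for a given engine. The wrapper records the fields listed above and captures API errors instead of raising them.

```python
import time
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class ResponseLog:
    engine: str            # e.g. "ChatGPT"
    model: str             # e.g. "gpt-4-turbo"
    timestamp: str         # ISO 8601 timestamp in UTC
    latency_ms: float      # wall-clock time of the API call
    text: Optional[str]    # full response text; None if the call failed
    error: Optional[str]   # error or API issue; None on success


def query_engine(engine: str, model: str, prompt: str,
                 send: Callable[[str], str]) -> ResponseLog:
    """Send `prompt` via `send` and record the fields this step logs."""
    timestamp = datetime.now(timezone.utc).isoformat()
    start = time.perf_counter()
    text: Optional[str] = None
    error: Optional[str] = None
    try:
        text = send(prompt)
    except Exception as exc:  # log API failures rather than aborting the run
        error = f"{type(exc).__name__}: {exc}"
    latency_ms = (time.perf_counter() - start) * 1000
    return ResponseLog(engine, model, timestamp, latency_ms, text, error)


if __name__ == "__main__":
    stub = lambda prompt: "TruthVouch Shield is a hallucination detection platform..."
    print(query_engine("ChatGPT", "gpt-4-turbo", "What is TruthVouch Shield?", stub))
```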

Step 3: Named Entity Recognition (NER)

Shield automatically extracts entities (numbers, names, dates, products) from the AI response.

Example response:

"TruthVouch Shield is a hallucination detection platform..."

Extracted entities:

- Organization: “TruthVouch”, “OpenAI”, “Anthropic”

- Product: “TruthVouch Shield”

- Percentage: “90%”, “above 90%”

- Type: “hallucination detection platform”

NER records what the AI stated, not whether it is right or wrong.
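Shield's NER model is not specified here. As a rough stand-in, this sketch combines a hypothetical dictionary of known names with regular expressions for percentages and years. A trained NER model generalizes to names it has never seen, which this does not. `KNOWN_NAMES`, `PATTERNS`, and `extract_entities` are illustrative names only.

```python
import re

# Hypothetical dictionary of known names; a trained NER model would not need one.
KNOWN_NAMES = {
    "TruthVouch Shield": "Product",
    "TruthVouch": "Organization",
    "OpenAI": "Organization",
    "Anthropic": "Organization",
    "Google": "Organization",
}

PATTERNS = [
    ("Percentage", re.compile(r"\b\d+(?:\.\d+)?%")),
    ("Date", re.compile(r"\b(?:19|20)\d{2}\b")),  # four-digit years only
]


def extract_entities(text: str) -> list[tuple[str, str]]:
    """Return (entity type, surface text) pairs found in `text`."""
    entities = []
    remaining = text
    # Longest names first, so "TruthVouch Shield" is not also reported as "TruthVouch".
    for name in sorted(KNOWN_NAMES, key=len, reverse=True):
        if name in remaining:
            entities.append((KNOWN_NAMES[name], name))
            remaining = remaining.replace(name, " ")
    for label, pattern in PATTERNS:
        entities.extend((label, m.group()) for m in pattern.finditer(text))
    return entities
```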

Step 4: Natural Language Inference (NLI)

Shield automatically compares the extracted entities/statements against your Truth Nugget using Natural Language Inference.

Your Truth:

- “TruthVouch Shield monitors 7 AI engines”

AI Said:

- “integrates with major LLM providers like OpenAI, Anthropic, Google”

NLI Decision:

- Is “major LLM providers” compatible with “7 AI engines”? YES

- Confidence: 96% (very confident in inference)

- Verdict: COMPATIBLE

Another Example:

- Your Truth: “Founded in 2024”

- AI Said: “established in 2020”

- NLI: 2024 ≠ 2020, so the statements conflict (CONTRADICTION)

- Confidence: 99%

- Verdict: CONTRADICTED (or “NEGATED”)

NLI handles paraphrases, synonyms, and logic:

- “Founded in 2024” vs “established in 2024” = COMPATIBLE

- “500+ customers” vs “over 400 customers” = COMPATIBLE

- “Available in 50 countries” vs “Available in North America only” = CONTRADICTED
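The numeric rows above rest on simple range reasoning. "500+" means at least 500 and "over 400" means at least 401. Because a count such as 500 satisfies both, the statements are compatible. The sketch below makes that reasoning explicit for a tiny, hypothetical phrase grammar. It illustrates the logic only and is not how a learned NLI model works internally. `to_range` and `compare_counts` are illustrative names.

```python
import math
import re


def to_range(phrase: str) -> tuple[float, float]:
    """Map a count phrase to an inclusive (low, high) range of whole numbers."""
    if m := re.search(r"(\d+)\+", phrase):                      # "500+"
        return int(m.group(1)), math.inf
    if m := re.search(r"(?:over|more than) (\d+)", phrase):     # "over 400"
        return int(m.group(1)) + 1, math.inf
    if m := re.search(r"(?:under|fewer than) (\d+)", phrase):   # "under 300"
        return 0, int(m.group(1)) - 1
    if m := re.search(r"(\d+)", phrase):                        # "7"
        n = int(m.group(1))
        return n, n
    raise ValueError(f"no count found in {phrase!r}")


def compare_counts(truth: str, claim: str) -> str:
    t_low, t_high = to_range(truth)
    c_low, c_high = to_range(claim)
    # If at least one count satisfies both statements, they can both be true.
    if max(t_low, c_low) <= min(t_high, c_high):
        return "COMPATIBLE"
    return "CONTRADICTED"
```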

Step 5: Score & Alert

Shield automatically assigns a truth score (0-100) and decides whether to alert based on your configured thresholds.

Scoring:

- ENTAILED (AI strongly matches truth): 95-100

- COMPATIBLE (AI roughly matches): 80-94

- NEUTRAL (AI doesn’t address the fact): 50-79

- CONTRADICTED (AI conflicts): 20-49

- STRONGLY CONTRADICTED (Clear falsehood): 0-19
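When reading stored results, it can help to map a score back to its band. This is a minimal sketch using the ranges listed above. `band_for` is a hypothetical helper, not part of Shield.

```python
# (minimum score, label), from the scoring ranges; checked from the highest band down.
BANDS = [
    (95, "ENTAILED"),
    (80, "COMPATIBLE"),
    (50, "NEUTRAL"),
    (20, "CONTRADICTED"),
    (0, "STRONGLY CONTRADICTED"),
]


def band_for(score: int) -> str:
    """Return the verdict band whose range contains `score` (0-100)."""
    if not 0 <= score <= 100:
        raise ValueError(f"truth scores run from 0 to 100, got {score}")
    for minimum, label in BANDS:
        if score >= minimum:
            return label
    return BANDS[-1][1]  # unreachable: the last band starts at 0
```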

Alert Decision:

- Score > 80: No alert (AI is accurate)

- Score 60-80: Medium alert (partial mismatch)

- Score < 60: High alert (serious hallucination)

Thresholds are configurable per account.
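The default rules reduce to two comparisons. In this sketch, the thresholds are pulled into a small configuration object so an account can override them. `AlertThresholds`, its field names, and `alert_level` are hypothetical, not Shield's settings keys.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertThresholds:
    # Defaults mirror the rules above; the field names are hypothetical.
    no_alert_above: int = 80  # score > 80 -> no alert
    medium_from: int = 60     # 60 <= score <= 80 -> medium; below 60 -> high


def alert_level(score: int, thresholds: AlertThresholds = AlertThresholds()) -> str:
    """Classify a truth score as "none", "medium", or "high"."""
    if score > thresholds.no_alert_above:
        return "none"
    if score >= thresholds.medium_from:
        return "medium"
    return "high"
```

The dataclass is frozen, so the shared default instance cannot be mutated by one caller and leak into another.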

Example alerts:

- “ChatGPT says detection is ‘high accuracy’ (Truth Score: 88) - No alert”

- “Claude says founded by [Incorrect Person] (Truth Score: 15) - High alert”

- “Gemini doesn’t mention founder at all (Truth Score: 50) - High alert”

Step 6: Log & Store

The result is stored in the audit trail:

Cross-Check Event
├─ Timestamp: 2026-03-14 14:32:10 UTC
├─ AI Engine: ChatGPT
├─ Model: gpt-4-turbo
├─ Truth Nugget: "Shield monitors 7 AI engines"
├─ Query: "Which AI engines does TruthVouch Shield monitor?"
├─ Response: "TruthVouch Shield monitors major LLM providers..."
├─ Entities Extracted: ["TruthVouch", "major LLM providers"]
├─ NLI Result: COMPATIBLE (96% confidence)
├─ Truth Score: 92
├─ Alert Triggered: No
└─ Status: Logged

NLI Explained

Natural Language Inference (NLI) is the key to Shield’s accuracy and reliability.

What is NLI?

NLI determines whether a statement (premise) supports, contradicts, or is neutral to another statement (hypothesis).

| Premise | Hypothesis | NLI Result |
|---|---|---|
| “TruthVouch was founded in 2026” | “TruthVouch was founded in 2026” | ENTAILED (100% match) |
| “TruthVouch was founded in early 2026” | “TruthVouch was founded in 2026” | ENTAILED (compatible) |
| “TruthVouch was founded in 2024” | “TruthVouch was founded in 2026” | CONTRADICTED (conflict) |
| “TruthVouch is a SaaS company” | “TruthVouch was founded in 2024” | NEUTRAL (unrelated) |
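NLI models trained on standard datasets usually output one probability per label (entailment, neutral, contradiction). Assuming Shield's model does the same, a verdict and confidence like those in the earlier examples can be read off by taking the most probable label. This is a sketch under that assumption, and `nli_verdict` is a hypothetical helper.

```python
import math

LABELS = ("ENTAILED", "NEUTRAL", "CONTRADICTED")


def nli_verdict(probs: dict[str, float]) -> tuple[str, float]:
    """Pick the most probable NLI label and report its probability as confidence."""
    if set(probs) != set(LABELS):
        raise ValueError(f"expected probabilities for {LABELS}")
    if not math.isclose(sum(probs.values()), 1.0, abs_tol=1e-6):
        raise ValueError("probabilities must sum to 1")
    label = max(LABELS, key=lambda name: probs[name])
    return label, probs[label]
```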

Why NLI vs Keywords?

Keyword matching (naive approach):

- Your truth: “Monitors 7 AI engines”

- AI says: “integrates with major LLM providers”

- Keyword match: NO (“7 AI engines” does not appear in the response)

- Alert: FALSE POSITIVE

NLI (Shield’s approach):

- Your truth: “Monitors 7 AI engines”

- AI says: “integrates with major LLM providers”

- Semantic understanding: “major LLM providers” is consistent with monitoring 7 engines

- Result: COMPATIBLE, no alert

- Success: NLI catches nuanced relationships

NLI is semantic; it understands meaning, not just words.
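The naive approach fits in a few lines, which is part of its appeal. This sketch (hypothetical helpers, not part of Shield) requires every word of the truth to appear in the response. It therefore rejects a paraphrase such as "integrates with major LLM providers".

```python
import re


def words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def keyword_match(truth: str, response: str) -> bool:
    """Naive check: True only if every word of the truth appears in the response."""
    return words(truth) <= words(response)
```

The same check also accepts "Shield never monitors 7 AI engines", because adding a negation does not remove any of the truth's words. Keyword matching can miss real contradictions as well as flag harmless paraphrases.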

How NLI Learns

Shield uses a fine-tuned NLI model trained on:

- Industry-standard NLI datasets

- Proprietary hallucination data from real-world use

- Your feedback: corrections you approve

Over time, as you review and approve corrections, Shield becomes better at judging claims about your organization.

Entity Extraction

Supported Entity Types

| Type | Examples |
|---|---|
| Organization | TruthVouch, OpenAI, Google |
| Person | Employee names, founders, executives |
| Product | TruthVouch Shield, GPT-4 |
| Location | San Francisco, USA |
| Date | March 2026, Q1 2026 |
| Number | Detection scores, $349/month, customer counts |
| Time Duration | Sub-200ms response time, 15 minutes |
| URL | https://truthvouch.com |

Confidence Scoring

Each extracted entity includes a confidence indicator (High, Medium, or Low) reflecting extraction quality. Configure minimum confidence thresholds in your dashboard to filter out noise.
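Filtering by a minimum confidence works because the three levels are ordered. This sketch assumes each extracted entity carries one of the three levels. `Confidence` and `filter_entities` are hypothetical names, not Shield's API.

```python
from enum import IntEnum


class Confidence(IntEnum):
    # Ordered so that comparisons like `>=` follow Low < Medium < High.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def filter_entities(entities: list[tuple[str, Confidence]],
                    minimum: Confidence) -> list[tuple[str, Confidence]]:
    """Keep entities whose confidence is at or above `minimum`."""
    return [(text, conf) for text, conf in entities if conf >= minimum]
```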

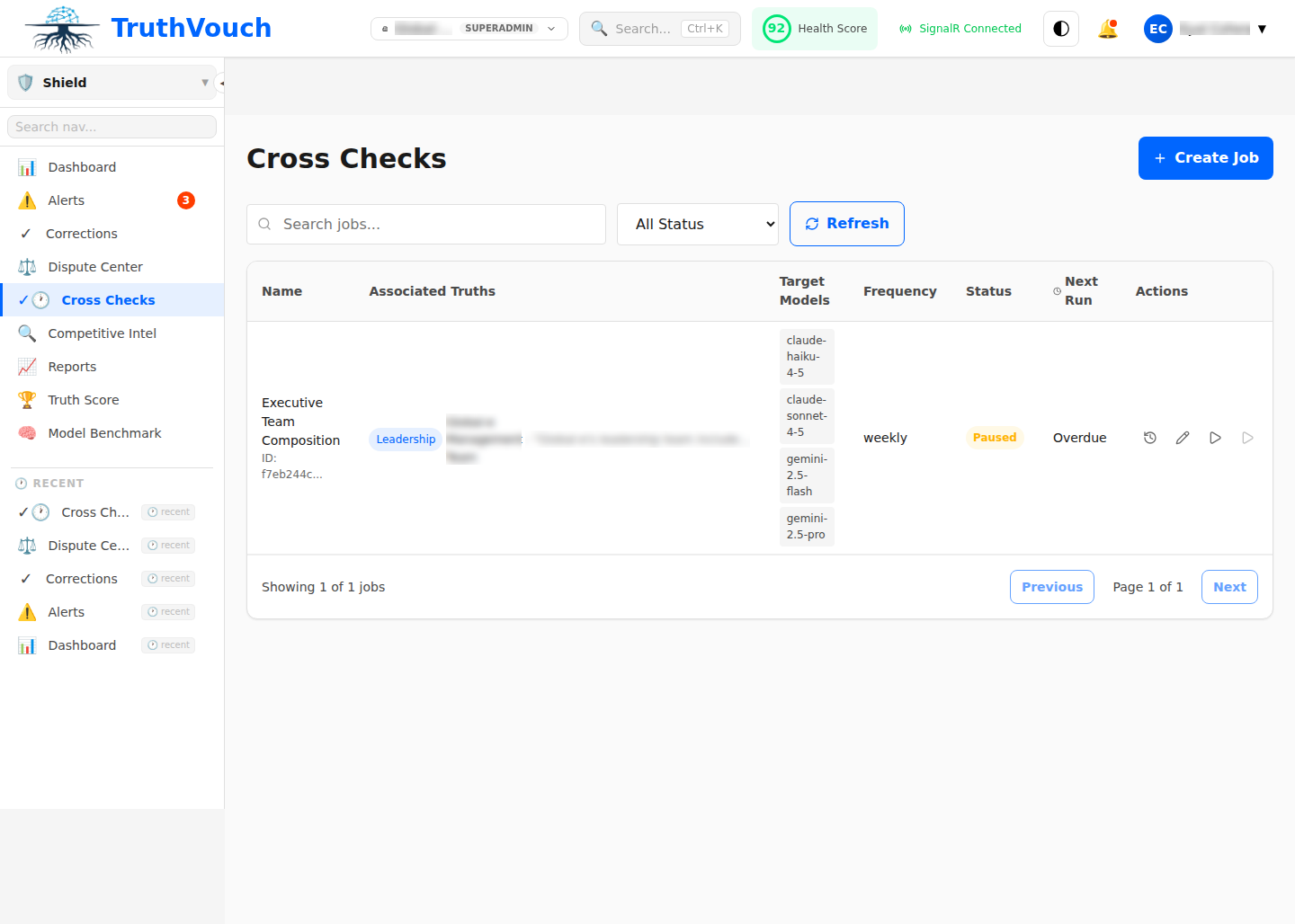

How Cross-Checks Are Scheduled

Shield doesn’t run cross-checks continuously. Instead, you set schedules.

Example Schedule:

- ChatGPT: Daily at 2:00 PM UTC

- Claude: Every 6 hours

- Gemini: Hourly (critical facts)

This balances:

- Detection timeliness: Catch hallucinations quickly

- API cost: Avoid excessive queries

- Accuracy: Enough data to spot trends
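Each schedule in the example can be modeled as a fixed interval counted from a reference time. This sketch computes the next run and the runs per day under that assumption. Anchoring Claude and Gemini at midnight UTC is an assumption, since the source gives only their intervals. `Schedule` and its fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Schedule:
    engine: str
    every: timedelta   # interval between runs
    anchor: datetime   # reference run time; runs fall on anchor + k * every

    def next_run(self, now: datetime) -> datetime:
        """First scheduled run strictly after `now`."""
        k = (now - self.anchor) // self.every + 1  # timedelta // timedelta -> int
        return self.anchor + k * self.every

    def runs_per_day(self) -> float:
        return timedelta(days=1) / self.every


if __name__ == "__main__":
    day = datetime(2026, 3, 14, tzinfo=timezone.utc)
    schedules = [
        Schedule("ChatGPT", timedelta(days=1), day.replace(hour=14)),  # daily at 2:00 PM UTC
        Schedule("Claude", timedelta(hours=6), day),                   # every 6 hours
        Schedule("Gemini", timedelta(hours=1), day),                   # hourly
    ]
    now = datetime(2026, 3, 14, 14, 32, 10, tzinfo=timezone.utc)
    for s in schedules:
        print(s.engine, s.next_run(now).isoformat(), s.runs_per_day())
```

The runs-per-day figure feeds directly into the query count (and API cost) for each engine.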

See Scheduling Cross-Checks for detailed configuration.

Cost & Efficiency

Each cross-check costs:

- One API query to the AI engine (minimal cost, which depends on the engine)

- One inference call to Shield’s NLI model (included in subscription)

Example cost: Monitoring 5 AI engines, daily schedule:

- 5 engines × 1 query/day = 5 queries/day

- 5 queries/day × 30 days ≈ 150 queries/month

- ChatGPT’s share (30 queries/month): ~$0.15/month in API cost, with NLI inference included in the subscription
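The same arithmetic can be written as two small functions. The $0.005 per-query figure is an illustrative assumption, chosen so that ChatGPT's ~30 queries a month (one engine, one query a day) come to the ~$0.15 quoted. Real per-query cost depends on the engine, model, and token counts. The function names are hypothetical.

```python
def monthly_queries(engines: int, queries_per_day: float, days: int = 30) -> float:
    """Queries sent per month across all monitored engines."""
    return engines * queries_per_day * days


def monthly_api_cost(queries: float, cost_per_query: float) -> float:
    """AI engine API spend only; Shield's NLI inference is included in the subscription."""
    return queries * cost_per_query


if __name__ == "__main__":
    print(monthly_queries(5, 1))  # 5 engines, daily schedule
    print(round(monthly_api_cost(monthly_queries(1, 1), 0.005), 2))  # ChatGPT's 30 queries
```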

This is more efficient than having staff manually check what AI engines say about you.

Accuracy Limitations

NLI has limits:

Where It’s Excellent (95%+)

- Clear facts (dates, people, numbers)

- Simple statements

- Direct contradictions

Where It’s Good (85-94%)

- Complex claims with multiple entities

- Paraphrases and synonyms

- Approximate numbers (“around 500” vs “500+”)

Where It’s Fair (70-84%)

- Subjective claims (“best in class”)

- Market position

- Indirect implications

Where It Struggles (<70%)

- Sentiment and tone (“loves” vs “likes”)

- Speculation and hypotheticals

- Context-dependent meanings

- Sarcasm

Mitigation: Make your Truth Nuggets more specific to improve detection in these edge cases.

Monitoring the Process

You can see each step:

Go to: Settings → Audit → Cross-Checks

Choose any cross-check event to see:

- Query generated

- Full AI response

- Entities extracted (with confidence scores)

- NLI reasoning

- Final score and alert decision

Next Steps

- Scheduling Cross-Checks — Configure monitoring

- Interpreting Results — Understand what the numbers mean

- Query Templates — Customize queries